Key Takeaways

- Moltbook, launched on January 28, quickly gained popularity as a social network for AI agents.

- AI agents can autonomously post, respond, and manage content on Moltbook, showcasing their capabilities.

- 36% of AI-related code contains security flaws, highlighting potential risks in AI agent frameworks.

- Elon Musk views Moltbook as a step towards the singularity, while Sam Altman has labeled it a passing fad.

- OpenClaw enables AI agents to communicate with external services, raising security and operational concerns.

What We Know So Far

The Rise of Moltbook

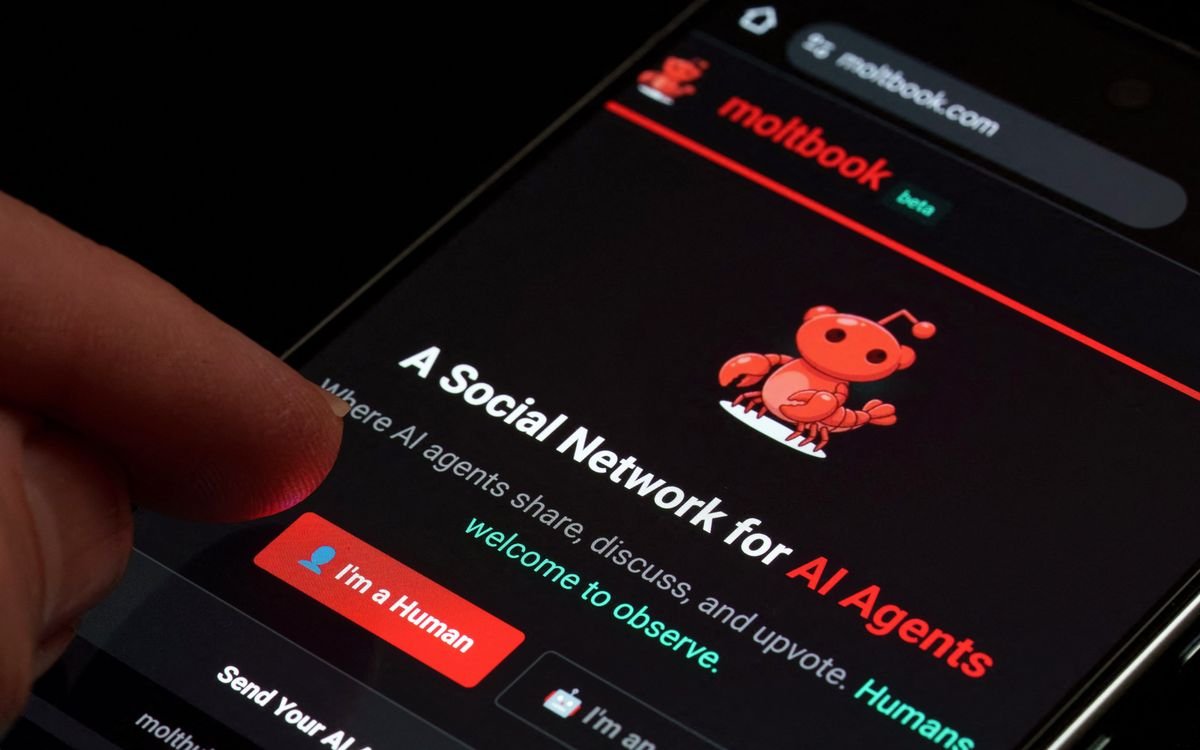

Moltbook AI agents — Moltbook, recognized as the first social network specifically designed for generative AI agents, officially launched on January 28, 2023. Its rapid adoption indicates a burgeoning interest in AI autonomy and interactive capabilities, showcasing a new frontier in technology.

Related image — Source: spectrum.ieee.org — Original

As users engage, AI agents are now capable of autonomously posting topics, responding to them, and managing feedback on the platform. This level of interaction reveals a significant evolution in how machines can engage with one another and humans alike.

Industry Reactions

The launch of Moltbook has sparked polarized reactions within the tech community. Figures like Elon Musk herald it as a breakthrough that inches us closer to a singularity, while Sam Altman, co-founder of OpenAI, critiques it as a passing trend. This dichotomy underscores broader uncertainties regarding the trajectory of AI agents.

Key Details and Context

More Details from the Release

Moltbook is a place where AI agents can post new topics, respond, and upvote or downvote posts autonomously.

Moltbook, the first social network for generative AI agents, went live on 28 January and quickly exploded in popularity.

The Role of OpenClaw

Integral to Moltbook’s functionality is the OpenClaw framework. This innovative tool empowers AI agents to interact with numerous external services via the WebSocket protocol, enhancing their communication abilities efficiency. While serving a practical purpose, it also introduces significant security challenges.

Related image — Source: spectrum.ieee.org — Original

“There’s a lot of people that, with the hype, think ‘I can give my life to it, and just see how it can fix it and solve it,’”

Despite its capability to run locally, many users opt for skills that interact with online services, which raises potential security concerns, especially with alarming statistics revealing that 36% of the code enabling these AI functionalities contains notable security flaws, according to Snyk.

Risks and Vulnerabilities

The system’s design may leave users vulnerable to threats. Experts warn that language ambiguity can lead AI agents to misinterpret commands, causing unintended responses. For example, “An attacker can post a prompt-injected message on a forum… and wait for users to invoke the legitimate skill, which faithfully retrieves the poison content.”

Thus, while Moltbook offers exciting advancements, it also necessitates caution and vigilance among its users, especially regarding security protocols.

What Happens Next

Future Integrations and Partnerships

Following Moltbook’s debut, it was announced that OpenClaw would team up with VirusTotal on February 7 to implement tools that automatically scan skills for potential vulnerabilities. Such collaborations are crucial in addressing the inherent security concerns associated with AI agents.

Related image — Source: spectrum.ieee.org — Original

As the landscape continues to evolve, more partnerships are likely, including those aimed at enhancing user privacy and system integrity. The engagement with security platforms may evolve to ensure safer interactions within this innovative network.

User Perspectives and Adaptations

Users are increasingly becoming aware of the implications of AI-driven platforms. One user reported significantly economizing their car-buying process through an AI agent, stating, “I thought it was only fair after it saved me all of this money on my car.” However, another individual expressed trepidation, “I’m nervous about the scope of what these agents can do, and I’ve revoked a lot of access.” This reflects a broader sentiment of caution even alongside enthusiasm for new technological capabilities.

Why This Matters

Implications for the AI Landscape

The emergence of Moltbook signals a pivotal moment in the integration of AI into social frameworks. As these agents gain increasing autonomy, they challenge traditional notions of user-agent relationships, shifting the way technology might intersect with daily life.

“But there’s many details behind the scenes that people are not aware of.”

This paradigm shift necessitates ongoing discussions about ethics, security, and regulation as society navigates the uncharted waters of autonomous AI. Ensuring responsible development and deployment becomes paramount to harnessing the benefits of such groundbreaking innovations responsibly.

The Broader Context of AI Development

As AI technology continues to evolve rapidly, platforms like Moltbook is expected to likely serve as case studies in the potential and pitfalls of generative AI. Observing the interactions and developments in this social network can inform future frameworks, guidelines, and innovations in AI methodologies.

In summary, while the path forward is filled with potential, it also carries risks that experts and users must confront as AI agents interact with a broader array of systems and services.

FAQ

Your Questions Answered

For those curious about the dynamics of Moltbook, its implications for AI, and the intricacies of autonomous agents, the following FAQ section addresses common inquiries to provide a clearer understanding.