Key Takeaways

- Google AI’s NAI framework integrates accessibility into its core, enhancing usability.

- NAI employs multimodal models that process voice, text, and images simultaneously.

- A multi-agent system enables dynamic adaptation of user interfaces based on context.

- Collaboration with organizations such as RIT/NTID and The Arc of the United States helps ground NAI in the real-world needs of users with disabilities.

- NAI aims not only to bridge the accessibility gap but also to benefit all users.

What We Know So Far

Overview of NAI

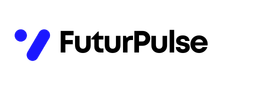

Google AI has introduced Natively Adaptive Interfaces (NAI), a framework for building accessible software around an agentic, multimodal AI system. Instead of presenting one fixed interface, NAI adapts applications to each user’s abilities and context, with the aim of significantly improving the user experience.

Because NAI integrates accessibility into its core architecture, it addresses a common problem: accessibility treated as an add-on rather than as a fundamental part of software design. This approach promotes more inclusive user interface development.

Addressing the Accessibility Gap

One of NAI’s primary objectives is to close what is termed the ‘accessibility gap’: the delay between when new features ship and when they become usable by people with disabilities. By making interfaces inherently adaptive, NAI seeks to bridge this divide and improve overall usability.

To achieve this, NAI employs a multi-agent system that dynamically adjusts user interfaces based on real-time contextual feedback. This adaptivity is what allows a single application to serve users with very different needs.
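To make the multi-agent idea concrete, here is a minimal Python sketch of an orchestrator that hands the current user context to several specialist agents, each of which adjusts one part of the interface. Everything in it is hypothetical: `UserContext`, `UIConfig`, the three agent functions, and `orchestrate` are illustrative names, not part of Google’s NAI, and the agents are simple rules standing in for the model-driven agents the framework describes.

```python
from dataclasses import dataclass, field


@dataclass
class UserContext:
    """Hypothetical snapshot of what the system knows about the user right now."""
    low_vision: bool = False
    deaf_or_hard_of_hearing: bool = False
    noisy_environment: bool = False
    prefers_voice_input: bool = False


@dataclass
class UIConfig:
    """Hypothetical interface settings that the agents may adjust."""
    font_scale: float = 1.0
    high_contrast: bool = False
    captions: bool = False
    voice_input: bool = False
    notes: list[str] = field(default_factory=list)


def vision_agent(ctx: UserContext, ui: UIConfig) -> None:
    # Enlarge text and raise contrast for users with low vision.
    if ctx.low_vision:
        ui.font_scale = max(ui.font_scale, 1.5)
        ui.high_contrast = True
        ui.notes.append("vision: enlarged text, high contrast")


def hearing_agent(ctx: UserContext, ui: UIConfig) -> None:
    # Turn on captions whenever audio is hard to hear, for any reason.
    if ctx.deaf_or_hard_of_hearing or ctx.noisy_environment:
        ui.captions = True
        ui.notes.append("hearing: captions on")


def input_agent(ctx: UserContext, ui: UIConfig) -> None:
    # Offer voice input when the user prefers it.
    if ctx.prefers_voice_input:
        ui.voice_input = True
        ui.notes.append("input: voice enabled")


# Each agent owns one concern; the orchestrator simply runs them all.
AGENTS = [vision_agent, hearing_agent, input_agent]


def orchestrate(ctx: UserContext) -> UIConfig:
    """Start from a default config and let every specialist agent adjust it."""
    ui = UIConfig()
    for agent in AGENTS:
        agent(ctx, ui)
    return ui


cfg = orchestrate(UserContext(low_vision=True, noisy_environment=True))
print(cfg.font_scale, cfg.high_contrast, cfg.captions, cfg.voice_input)
# 1.5 True True False
```

Re-running `orchestrate` whenever the context changes (for example, when the user moves into a noisy room) is this sketch’s stand-in for real-time adaptation. Keeping each concern in its own agent means a new need can be handled by adding an agent rather than rewriting the others.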

Key Details and Context

Underlying Technology

The NAI framework is built on multimodal models such as Gemini, which can process voice, text, and images together. This lets users interact in whichever mode suits them, for example speaking a question instead of typing it, which broadens the range of people who can use an application.
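As an illustration of what handling several modalities at once means at the request level, the sketch below bundles whichever of a voice clip, typed text, and an image the user supplies into one ordered request, so a multimodal model could consider them together rather than in separate calls. The `TextPart`, `AudioPart`, `ImagePart`, `build_request`, and `modalities` names are assumptions made for this example, loosely following the list-of-parts shape common to multimodal model APIs; this is not the Gemini API itself, and no model is called.

```python
from dataclasses import dataclass
from typing import Optional, Union


@dataclass(frozen=True)
class TextPart:
    text: str


@dataclass(frozen=True)
class AudioPart:
    mime_type: str  # e.g. "audio/wav"
    data: bytes


@dataclass(frozen=True)
class ImagePart:
    mime_type: str  # e.g. "image/png"
    data: bytes


Part = Union[TextPart, AudioPart, ImagePart]


def build_request(
    text: Optional[str] = None,
    audio: Optional[AudioPart] = None,
    image: Optional[ImagePart] = None,
) -> list[Part]:
    """Collect whichever inputs the user supplied into a single ordered request."""
    # Order is a choice of this sketch: media first, then the typed text.
    parts: list[Part] = []
    if image is not None:
        parts.append(image)
    if audio is not None:
        parts.append(audio)
    if text is not None:
        parts.append(TextPart(text))
    return parts


def modalities(parts: list[Part]) -> list[str]:
    """Name the modality of each part, in request order."""
    names = {TextPart: "text", AudioPart: "audio", ImagePart: "image"}
    return [names[type(p)] for p in parts]


# A user photographs a label and types a question about it.
request = build_request(
    text="What does this label say?",
    image=ImagePart("image/png", b"\x89PNG..."),  # placeholder bytes
)
print(modalities(request))  # ['image', 'text']
```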

In practice, NAI enables features such as asking questions about a video while it plays in an accessible video application, which lets users engage more directly with the material presented.
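The interactive-query feature described above could work roughly as follows: when the user asks a question mid-video, the player pauses, gathers the captions around the current position as context, and passes the question plus that context to a model. This is a hypothetical sketch, not NAI’s implementation: `Caption`, `Player`, `captions_near`, `ask_during_playback`, and the 10-second window are all assumptions, and `answer_fn` is a placeholder for whatever model call an application would actually make.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Caption:
    start: float  # seconds
    end: float    # seconds
    text: str


@dataclass
class Player:
    captions: list[Caption]
    position: float = 0.0  # current playback time in seconds
    playing: bool = True


def captions_near(captions: list[Caption], t: float, window: float = 10.0) -> list[str]:
    """Return the text of captions that overlap the interval [t - window, t]."""
    lo = t - window
    return [c.text for c in captions if c.end >= lo and c.start <= t]


def ask_during_playback(
    player: Player,
    question: str,
    answer_fn: Callable[[str, list[str]], str],
) -> str:
    # Pause so the user does not miss content while the answer is delivered;
    # this sketch leaves playback paused until the user chooses to resume.
    player.playing = False
    context = captions_near(player.captions, player.position)
    return answer_fn(question, context)


def toy_answer(question: str, context: list[str]) -> str:
    # Stand-in for a real model: echoes the question and the context it received.
    return f"Q: {question} | context: {' '.join(context)}"


player = Player(
    captions=[
        Caption(0, 4, "Welcome."),
        Caption(4, 9, "This is a heron."),
        Caption(20, 25, "Later scene."),
    ],
    position=8.0,
)
print(ask_during_playback(player, "What bird is this?", toy_answer))
# Q: What bird is this? | context: Welcome. This is a heron.
```

Grounding the question in the captions near the current timestamp keeps the model’s answer tied to what is on screen, rather than to the video as a whole.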

Collaboration for Real-World Impact

The development of NAI has been supported by partnerships with organizations including RIT/NTID (the National Technical Institute for the Deaf at Rochester Institute of Technology) and The Arc of the United States. These collaborations are meant to ensure that the framework works in practice, not just in theory, for users with diverse needs.

Prototypes piloted with these partners let Google refine the NAI framework based on real-world experience, with the goal of serving all users effectively, particularly those with disabilities.

What Happens Next

Future Developments

The ongoing development of NAI signals a shift toward more inclusive technology design. Google’s commitment to accessibility and adaptability in UI design suggests that further enhancements and features are likely to emerge from this framework.

As digital interfaces evolve, agentic multimodal systems are likely to become increasingly essential, making technology more user-friendly for everyone.

Potential Challenges

While the potential benefits of NAI are significant, implementing such a framework also brings challenges. Compatibility across platforms and devices remains a priority, and continuous user feedback will be crucial for addressing issues as they surface.

Moreover, as with any emerging technology, a sustained focus on user privacy and data security will be essential to earn user trust and to ensure the long-term success of these adaptive systems.

Why This Matters

Transforming Accessibility

Google AI’s introduction of NAI marks a significant moment for software accessibility. By adopting a framework designed to understand and adapt to user needs, Google sets a precedent for future software design.

Treating accessibility as a core component rather than an afterthought reflects a fundamental shift in how technology can address real-world challenges, and points toward a more inclusive digital environment.

Broad Implications

Not only is NAI expected to improve usability for individuals with disabilities, but it also promises to enhance the overall experience for all users. Such advancements can lead to wider user engagement, increased satisfaction, and a more democratized approach to technology.

By prioritizing adaptability and multimodal access, Google AI’s NAI framework heralds an era of more intuitive and responsive user interfaces, reflecting a commitment to inclusivity and innovation.

FAQ

Answers to Common Questions

The following answers address common queries surrounding the NAI framework:

- What is the main goal of Google AI’s NAI framework?

The NAI framework aims to create accessible software tailored to users’ abilities and contexts.

- How does NAI improve user experience?

NAI allows for real-time adjustments in user interfaces, improving usability for everyone.

- What technologies underpin the NAI framework?

NAI is built on multimodal models like Gemini, enabling seamless interaction with various media forms.

- Who are the collaborators behind NAI development?

Google partnered with organizations like RIT/NTID and The Arc of the United States for NAI’s development.

Sources

- Primary source: Google AI Introduces Natively Adaptive Interfaces (NAI): An Agentic Multimodal Accessibility Framework Built on Gemini for Adaptive UI Design (marktechpost.com)
